Is ChatGPT Safe To Use in Daily Life?


Nov 19, 2024. Filed to: App Review

As more people use ChatGPT, the AI chatbot developed by OpenAI, questions about its safety are being raised. ChatGPT lets users hold conversations with an AI system that answers in natural language.

This article examines the safety of ChatGPT, including its purpose and its information sources, why some schools have banned it, and the risk management techniques OpenAI uses. Finally, it discusses why human supervision and intervention still matter.

We also offer a bonus tip on protecting kids from risky apps with FamiSafe. Without further ado, let's dive in!

A Guide to Using ChatGPT Safely

Part 1. What is the purpose of ChatGPT?

ChatGPT is a conversational AI language model developed by OpenAI. It was built with machine-learning techniques that analyze large amounts of text to discover patterns in how language is used. Its main uses are as follows:

1. Conversational AI

ChatGPT can interact with people and answer their questions in natural language. This makes it a useful tool for online education, customer support, and other applications that need natural language interaction.

2. A Variety of Subjects

It was trained on a large amount of text collected largely from the internet, which gives it broad knowledge of many topics. Users can ask ChatGPT about almost any subject and get an answer in natural language.

3. Text Completion

Users can have ChatGPT generate text on a given topic. This has several applications, including help with writing and content development.

4. Efficiency

For routine questions, ChatGPT can be faster and cheaper than human customer care representatives. Because it can handle many conversations at once and generate replies instantly, users can quickly get the information they want.

Now that we have covered what ChatGPT is used for, let's look at where its information comes from, so that we can better evaluate its safety. Read on to learn about its information sources!

Part 2. Where does ChatGPT get its information?

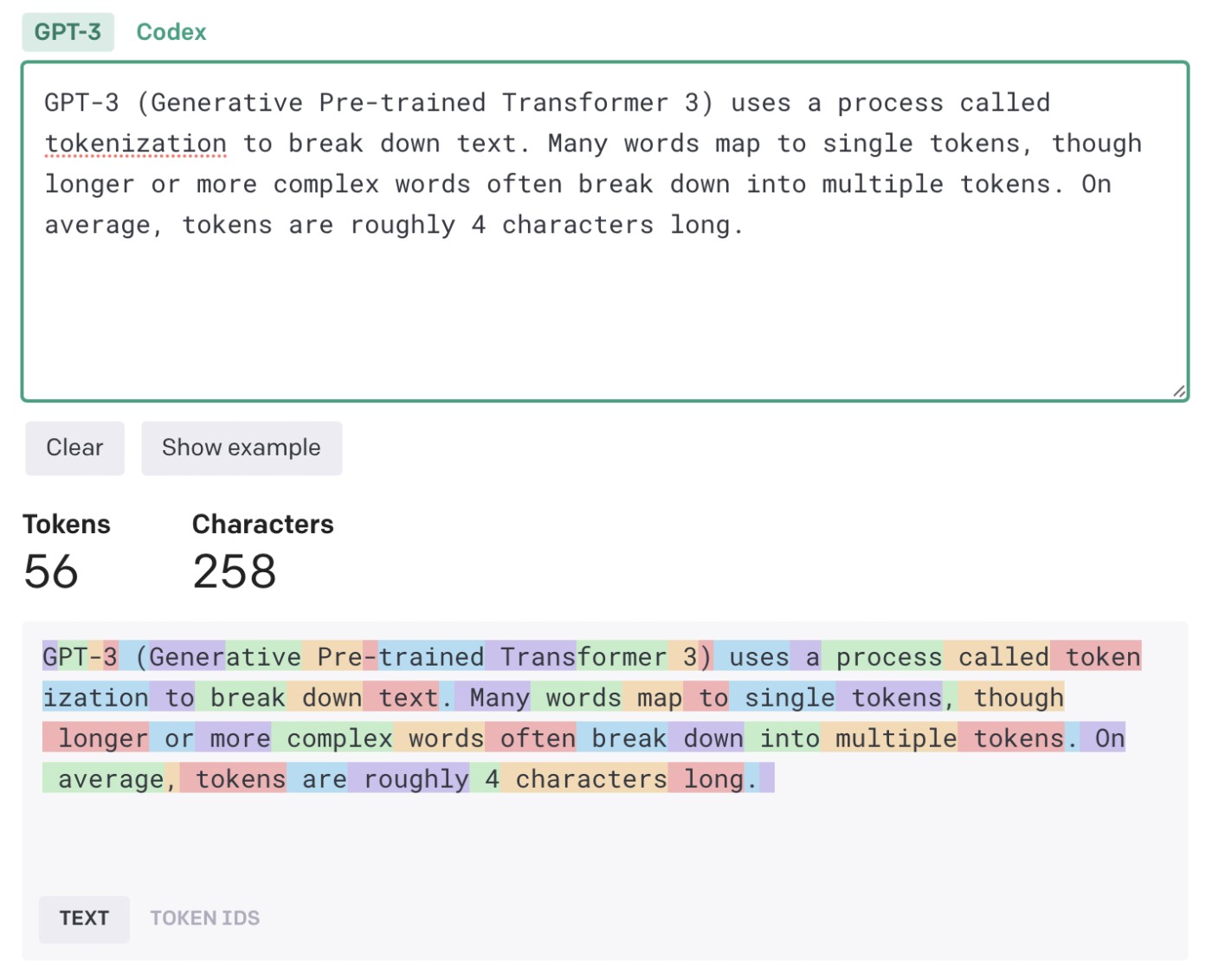

ChatGPT derives its information from an enormous text dataset known as a corpus. This corpus was compiled from a variety of sources, including books, essays, websites, and other written works.

How is the corpus used?

The corpus is used to teach ChatGPT to recognize linguistic patterns, which helps it produce natural language answers to questions and prompts.

During training, ChatGPT uses deep learning methods to examine the text and discover patterns in it. This enables it to take context into account, capture the meaning of words and phrases, and produce relevant replies.
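To make "discovering patterns in text" more concrete, here is a toy sketch that counts which word tends to follow which in a tiny made-up corpus, then predicts the most likely next word. This is a simple bigram counter chosen for illustration, not how ChatGPT actually works: ChatGPT uses a large transformer neural network, but the underlying idea of learning from text and predicting what comes next is similar. The function names `train_bigrams` and `predict_next` are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Optional


def train_bigrams(sentences: List[str]) -> Dict[str, Counter]:
    """Count how often each word is immediately followed by each other word."""
    counts: Dict[str, Counter] = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts


def predict_next(counts: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the word most often seen after `word`, or None if `word` was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# A tiny made-up corpus, used only for illustration.
corpus = [
    "the cat sat on the mat",
    "the cat chased the dog",
    "the dog sat on the rug",
]

model = train_bigrams(corpus)
print(predict_next(model, "sat"))      # on
print(predict_next(model, "unicorn"))  # None
```

A real language model replaces these raw counts with billions of learned parameters and considers far more context than the single previous word, which is what lets it produce coherent answers.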

How is the training data prepared?

The training data is preprocessed to remove duplicates and irrelevant information, and the remaining text is split into tokens.

Tokens are small units of text, such as whole words, parts of words, or punctuation marks. ChatGPT is trained on these tokens to recognize patterns in how words are used in natural language.
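The preprocessing steps described above (removing duplicates, then splitting text into tokens) can be sketched in a simplified form. This is an illustrative assumption, not OpenAI's pipeline: it only drops exact duplicates and uses a word-level tokenizer, whereas GPT models use byte-pair-encoding subword tokens (as in OpenAI's `tiktoken` library) and production pipelines typically use more sophisticated filtering. The helpers `deduplicate`, `tokenize`, and `build_vocab` are hypothetical.

```python
import re
from typing import Dict, List


def deduplicate(documents: List[str]) -> List[str]:
    """Drop exact duplicates (ignoring case and extra whitespace), keeping the first copy."""
    seen = set()
    unique = []
    for doc in documents:
        key = " ".join(doc.lower().split())
        if key not in seen:
            seen.add(key)
            unique.append(doc)
    return unique


# Toy tokenizer: each word and each punctuation mark becomes one token.
TOKEN_PATTERN = re.compile(r"\w+|[^\w\s]")


def tokenize(text: str) -> List[str]:
    return TOKEN_PATTERN.findall(text.lower())


def build_vocab(documents: List[str]) -> Dict[str, int]:
    """Give each distinct token an integer ID, in order of first appearance."""
    vocab: Dict[str, int] = {}
    for doc in documents:
        for token in tokenize(doc):
            if token not in vocab:
                vocab[token] = len(vocab)
    return vocab


docs = [
    "ChatGPT answers questions.",
    "chatgpt answers questions.",  # duplicate apart from capitalization
    "It learns patterns in text!",
]
clean = deduplicate(docs)
vocab = build_vocab(clean)
ids = [vocab[t] for t in tokenize(clean[1])]
print(tokenize(clean[0]))  # ['chatgpt', 'answers', 'questions', '.']
print(ids)                 # [4, 5, 6, 7, 8, 9]
```

The model never sees raw text directly; it works with these integer IDs, which is why tokenization comes before training.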

Note: ChatGPT is trained on a large text dataset gathered largely from the internet, which it uses to develop its natural language processing abilities.

As a result, ChatGPT can handle a wide variety of subjects and produce natural language replies to user questions and prompts.

Part 3. Why has ChatGPT been banned in some schools?

Several schools have banned students from using ChatGPT and other AI-powered chatbots, citing safety and other concerns. ChatGPT has been prohibited in some schools and school districts in the US, Australia, and India.

Here are some of the reasons given:

1. Improper interactions and cyberbullying

One worry is that students may share personal information or have improper interactions through ChatGPT, which could lead to cyberbullying or other dangerous situations.

Schools have a duty to safeguard children from these kinds of threats, and the use of ChatGPT may be seen as a risk to that responsibility.

2. Cheating is possible

Concerns have also been raised that ChatGPT makes it easier to cheat on exams or homework. Because it can produce natural language responses to requests, students may use it to complete homework or look up answers.

3. Possibility of misleading or incorrect information

Another issue is that ChatGPT may offer biased or false information. Although it is trained on a large text dataset, it can still produce answers that are incorrect or unsuitable for a classroom.

Note: This could result in students being given false information, which would harm their education.

4. Legal and moral considerations

Before permitting the use of ChatGPT, schools must also consider legal and ethical issues. These include data privacy laws and the obligation to provide all students with a safe learning environment.

Note: The decision to prohibit ChatGPT in schools is difficult because it requires careful analysis of student safety and legal concerns.

AI-powered chatbots have the potential to be helpful teaching tools, so schools must weigh their potential benefits against any risks they may pose to children.

Parents who want to keep an eye on their children, whether because of privacy concerns or to prevent cyberbullying, can use the software described below.


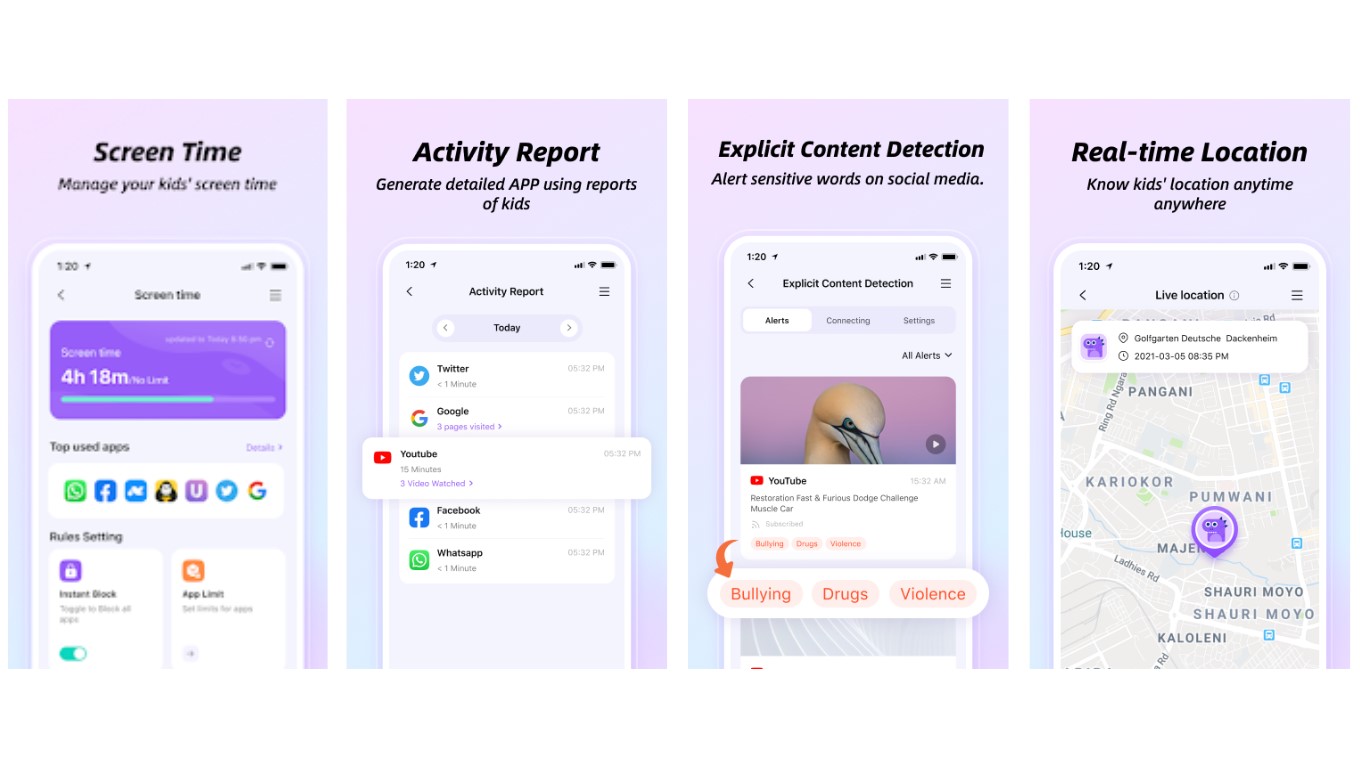

Part 4. Safety controls for AI systems: FamiSafe

Parents can use FamiSafe to control their kids' use of ChatGPT, which helps discourage copying assignments from chatbots and other online resources. Its content filtering can restrict access to certain sites, which stops kids from visiting sites that provide ready-made answers.

- Web Filter & SafeSearch

- Screen Time Limit & Schedule

- Location Tracking & Driving Report

- App Blocker & App Activity Tracker

- YouTube History Monitor & Video Blocker

- Social Media Texts & Porn Images Alerts

- Works on Mac, Windows, Android, iOS, Kindle Fire, Chromebook

With app blocking, parents can prevent children from using chatbot applications. The detailed activity information collected from a child's devices also helps parents spot problems and take appropriate action.
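A web filter like the one described above can be sketched, in general terms, as a domain blocklist check. This is not FamiSafe's implementation (the article does not describe its internals); the `is_blocked` helper and the domains in `BLOCKED_DOMAINS` are hypothetical placeholders.

```python
from typing import AbstractSet
from urllib.parse import urlparse

# Hypothetical blocklist a parent might configure; these domains are placeholders.
BLOCKED_DOMAINS = frozenset({"chatbot.example", "answers.example.org"})


def is_blocked(url: str, blocked: AbstractSet[str] = BLOCKED_DOMAINS) -> bool:
    """Return True if the URL's host is a blocked domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    # Match the domain itself and any subdomain (e.g. www.chatbot.example),
    # but not unrelated hosts that merely end with the same letters.
    return any(host == domain or host.endswith("." + domain) for domain in blocked)


print(is_blocked("https://www.chatbot.example/chat"))     # True
print(is_blocked("https://school.example.edu/homework"))  # False
```

Matching on the parsed host name, rather than searching the whole URL string, avoids accidentally blocking pages that only mention a blocked domain in their path or query.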

Key Features of FamiSafe

The key features of FamiSafe for use on children's devices are as follows:

- Lets parents block applications, such as chatbots, on their child's smartphone to stop inappropriate use.

- Provides web content screening to prevent access to questionable websites or search results.

- Geofencing alerts can be set up to notify parents when their child enters or leaves a certain region.

- Parents can track their child's whereabouts in real-time.

- Screen time limits prevent children from using their devices excessively.

- Parents can monitor their children's app and device usage as well as their browsing history.

- Parents can set up a schedule for their child's device use, such as blocking particular applications or the internet during set hours.

- Detects potentially dangerous words or images on a child's device.

Overall, FamiSafe offers parents a full range of options to help protect their kids online. With these features, parents can rest assured that they have the tools to keep their children safe.

Part 5. Safety risk management in ChatGPT

OpenAI uses a number of risk management techniques to protect ChatGPT users. Here are a few ways ChatGPT controls safety and security risks:

1. Content moderation

ChatGPT is trained to decline harmful requests, and OpenAI uses automated moderation systems to detect offensive or harmful content. This helps prevent hazardous or improper conversations with the chatbot.

2. User Monitoring

OpenAI also monitors for misuse, such as attempts to share private information or to have improper conversations. ChatGPT may warn users when content appears to violate its usage policies, and flagged conversations can be reviewed by human moderators.

3. Privacy Protection

OpenAI states that user data is encrypted and stored securely to prevent unauthorized access or data leaks. Even so, users should avoid sharing personally identifying information in chats, because conversations may be stored and, depending on the user's settings, used to improve OpenAI's models.

4. Robust security mechanisms

OpenAI uses security measures to guard against unauthorized access and hacking attempts. These include encryption, firewalls, and routine security audits to find and fix potential flaws.

Together, these measures aim to let users interact with the chatbot on a platform designed to manage safety hazards.

Part 6. Human supervision and intervention

Although ChatGPT has several risk management techniques in place to keep users safe, human oversight and intervention remain crucial parts of its safety process. Here is why:

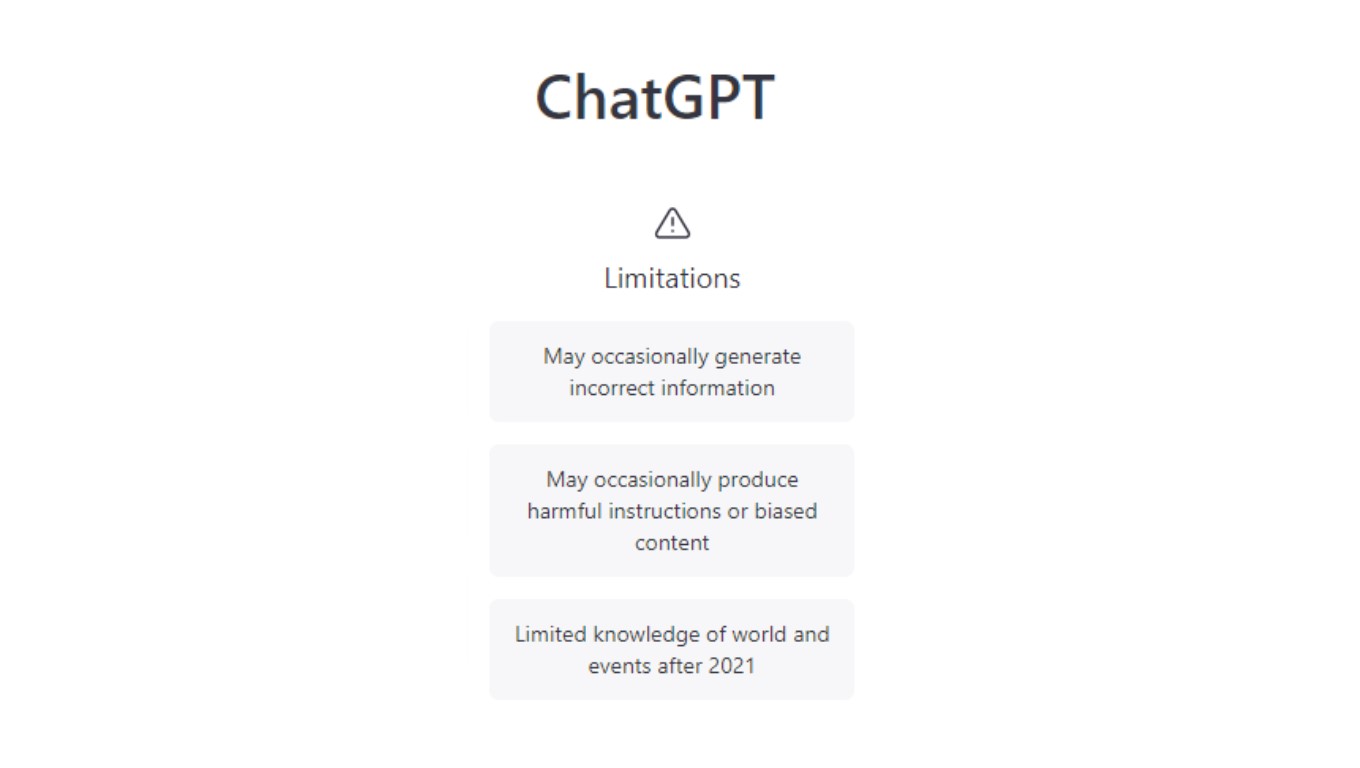

Artificial intelligence (AI) limitations

ChatGPT can create natural language replies to queries, but it still has limits. It may not fully understand the context of a discussion, which can lead to inappropriate or incorrect replies.

Human oversight is required to ensure that ChatGPT is giving users accurate information.

Managing complex problems

Users occasionally raise complicated or sensitive concerns that require human action. For instance, if a user expresses suicidal thoughts or reports bullying, a human moderator may need to step in and offer resources or help.

Handling mistakes and exceptions

Even with risk management techniques in place, errors or exceptions may require human involvement. A human moderator can help resolve technical issues or complaints for the user.

Note: To overcome these issues, ChatGPT combines AI with human moderation, and this combination offers the best user experience.

AI-powered chatbots can handle everyday tasks and deliver basic information, while human moderators offer more individualized help and intervention when needed.

Conclusion

ChatGPT is a powerful language model that can help students with assignments and general knowledge. However, it's important to be aware of the risks of using a chatbot to finish homework.

Parents can help reduce these risks by monitoring their child's online behavior with FamiSafe, which helps give kids a safer internet environment.

FamiSafe helps with content screening, app blocking, location monitoring, and screen time management. Remember that it's up to each individual to make decisions and use these tools responsibly.

Thomas Jones

Chief Editor